Why You Should Have a Robots.txt File and How to Generate It by File2File

What is Robots.txt?

A robots.txt file is a plain text file placed in the root directory of your website that tells web crawlers (like Googlebot) which parts of the site they may crawl. It lets you steer crawlers away from irrelevant or low-value pages. Note that it governs crawling, not indexing: a blocked URL can still appear in search results if other pages link to it, so pages that must stay out of search results need a noindex directive or authentication instead.
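To illustrate how a compliant crawler reads these rules, here is a minimal sketch using Python's standard `urllib.robotparser` module. The rules, the `is_allowed` helper, and the example.com URLs are assumptions made for illustration, not part of any real site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules for illustration; example.com is a placeholder domain.
RULES = """\
User-agent: *
Disallow: /private/
"""


def is_allowed(rules: str, user_agent: str, url: str) -> bool:
    """Return True if the given rules let user_agent crawl url."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so no network request is made here.
    parser.parse(rules.splitlines())
    return parser.can_fetch(user_agent, url)


print(is_allowed(RULES, "Googlebot", "https://example.com/private/report.html"))  # False
print(is_allowed(RULES, "Googlebot", "https://example.com/blog/"))  # True
```

The same check can be used in a test suite to confirm that a rules file blocks only the paths you intend.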

Why You Should Have a Robots.txt File

- Improves SEO: Prevents search engines from wasting crawl budget on unimportant pages.

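- Keeps Crawlers Out of Private Areas: Asks compliant crawlers to skip private or under-construction sections. The file is publicly readable and purely advisory, so it is not a substitute for authentication.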

- Enhances Performance: Focuses crawling resources on essential pages.
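As a rough sketch of what such a file might contain, the snippet below builds a rules string from a list of low-value paths. The function name `build_robots_txt` and the paths `/cart/` and `/staging/` are hypothetical choices for illustration.

```python
from typing import Iterable


def build_robots_txt(disallow: Iterable[str], user_agent: str = "*") -> str:
    """Build robots.txt content that blocks the given paths for user_agent."""
    lines = [f"User-agent: {user_agent}"]
    paths = list(disallow)
    if paths:
        lines.extend(f"Disallow: {path}" for path in paths)
    else:
        # An empty Disallow value means nothing is blocked.
        lines.append("Disallow:")
    return "\n".join(lines) + "\n"


# Hypothetical low-value paths that would otherwise consume crawl budget.
print(build_robots_txt(["/cart/", "/staging/"]))
```

Generating the file from a list keeps the rules in one place, which helps when the same paths are referenced elsewhere in your configuration.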

How to Generate Robots.txt in Popular Frameworks

1. Node.js:

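- Create a file named robots.txt in the root folder.

- Use fs in an Express route to serve it:

const express = require('express');
const fs = require('fs');
const app = express();
// Serve the file as plain text at /robots.txt
app.get('/robots.txt', (req, res) => {
  res.type('text/plain');
  res.send(fs.readFileSync('./robots.txt', 'utf8'));
});
app.listen(3000); // port 3000 is an example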

2. React:

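- Place robots.txt in the public folder. Build tools such as Create React App and Vite copy files in public to the build output unchanged, so the file is served at /robots.txt.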

3. Vue:

- Add robots.txt to the public folder. Vue CLI copies it to the build output, so it is served from the site root.

4. Next.js:

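- For a static file, place robots.txt in the public folder. To generate it dynamically, use an API route. API routes are served under /api, so also add a rewrite from /robots.txt to the route in next.config.js:

// pages/api/robots.js (example path, served at /api/robots)
export default function handler(req, res) {
  res.setHeader('Content-Type', 'text/plain');
  res.send('User-agent: *\nDisallow: /private');
}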

5. WordPress:

- Use a plugin like Yoast SEO or create a custom file in the root directory.

6. Django:

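- Serve robots.txt using a view, and map it to the robots.txt path in urls.py:

from django.http import HttpResponse

def robots_txt(request):
    # Return the rules as plain text
    content = "User-agent: *\nDisallow: /private/"
    return HttpResponse(content, content_type="text/plain")

# In urls.py: path("robots.txt", robots_txt)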

7. Flask:

- Add a route:

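from flask import Flask

app = Flask(__name__)

@app.route('/robots.txt')
def robots_txt():
    # Flask accepts a (body, status, headers) tuple as the response
    return "User-agent: *\nDisallow: /private", 200, {'Content-Type': 'text/plain'}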
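The dynamic examples above share one pattern: match the /robots.txt path and return the rules with a text/plain content type. Here is a framework-free sketch of that pattern using the WSGI interface. The names `robots_app` and `ROBOTS_TXT` are illustrative assumptions, not part of any framework.

```python
ROBOTS_TXT = "User-agent: *\nDisallow: /private/\n"


def robots_app(environ, start_response):
    """Minimal WSGI app: answer /robots.txt with plain text, 404 otherwise."""
    if environ.get("PATH_INFO") == "/robots.txt":
        status = "200 OK"
        body = ROBOTS_TXT.encode("utf-8")
    else:
        status = "404 Not Found"
        body = b"Not Found\n"
    headers = [
        ("Content-Type", "text/plain; charset=utf-8"),
        ("Content-Length", str(len(body))),
    ]
    start_response(status, headers)
    # WSGI expects an iterable of byte strings as the response body.
    return [body]
```

To try it locally, you could pass `robots_app` to `wsgiref.simple_server.make_server` from the standard library and request /robots.txt in a browser.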

Recent Posts

Article

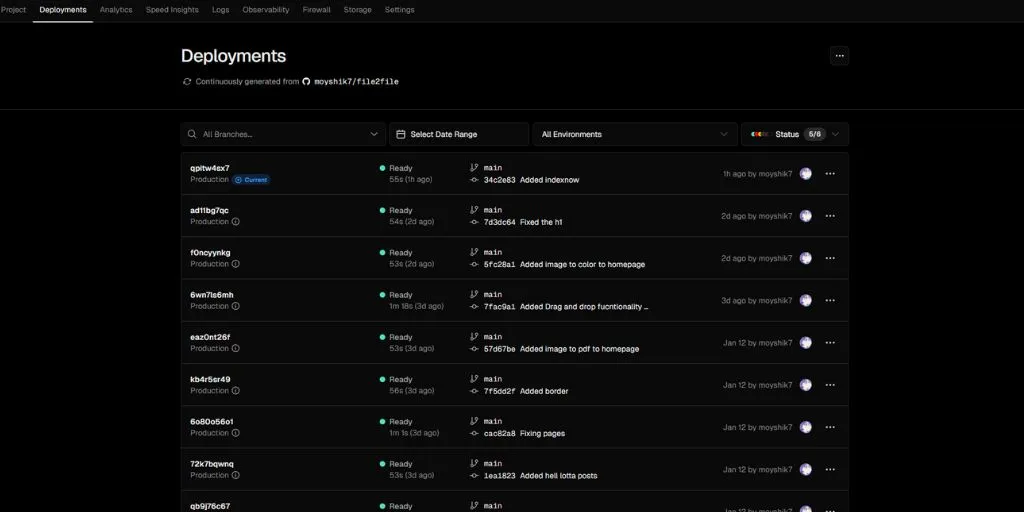

Hosting E-commerce Websites on Vercel

Article

Vercel vs Netlify: Which is Better?

Article

How to Set Up Custom Domains on Vercel: A Comprehensive Guide

Article

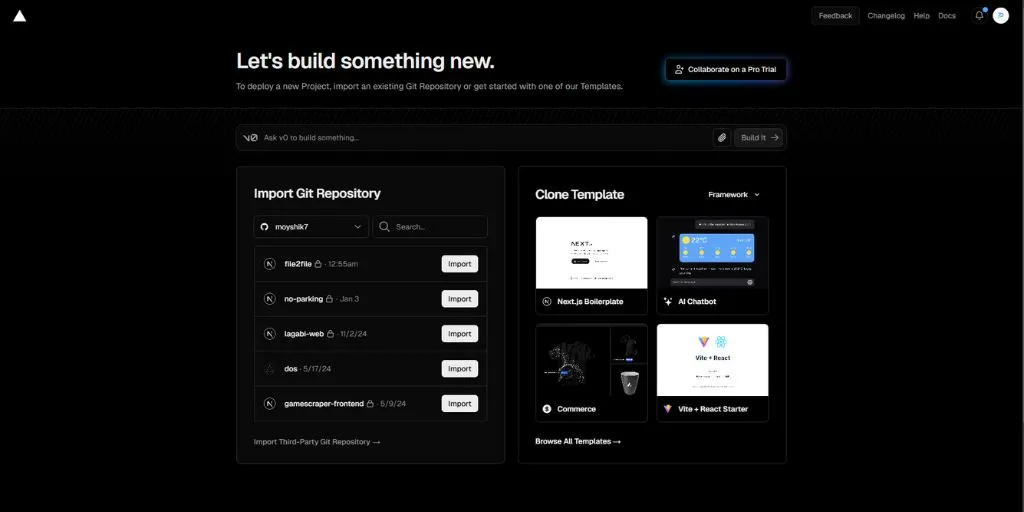

Getting Started with Vercel Hosting: A Step-by-Step Guide

Article

Como Migrar um Aplicativo para Vercel

Article

How to Migrate an App to Vercel

Article

How to Manage Environment Variables on Vercel for Secure Confi...

Article